Date Posted: Jun 11th 2024

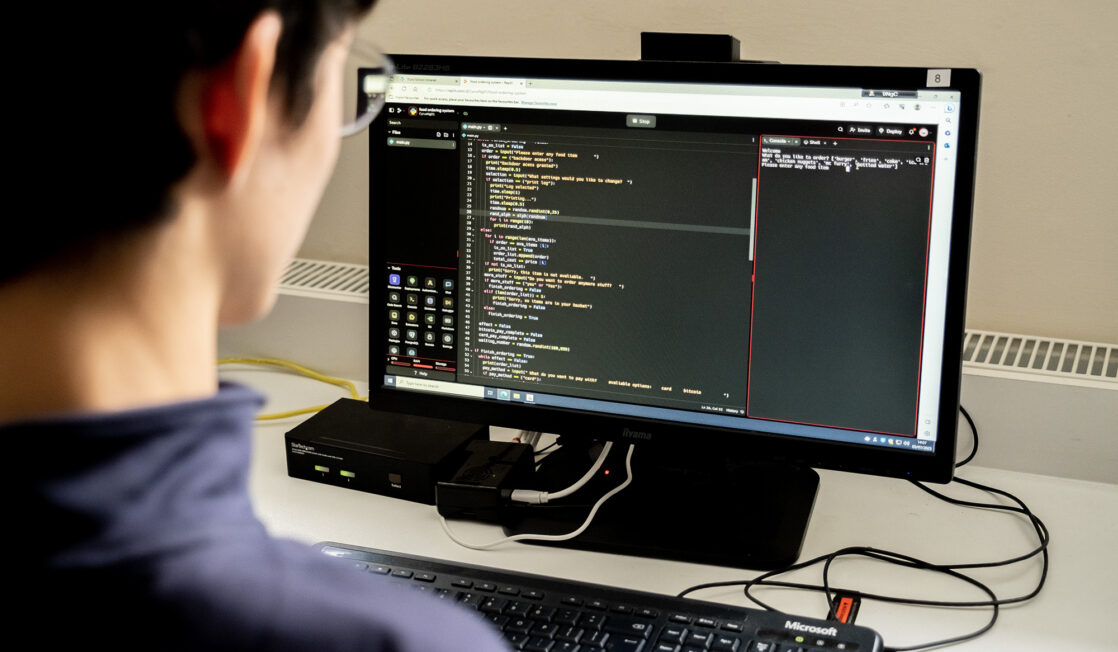

Our AI Forum meets today to discuss advances in this area and AI’s impact on teaching and learning. Set up over a year ago, the Forum is a half-termly meeting at which staff present their AI reading and research and share how they are navigating AI in the classroom.

Head of Truro School, Andy Johnson, recently wrote an article on this subject, which we share with you here. We hope it provides valuable insight into Truro School’s approach and ethos surrounding this fascinating and fast-paced topic.

Truro School welcomes the potential of AI to enhance learning. That welcome sits alongside the necessary caution, and some uncertainty, over how we will equip our pupils and staff to ensure the benefits outweigh the risks. Our focus as a School is, and will remain, on a values-based and pedagogy-driven approach to education, and our relationship with AI will be guided by those principles too.

Our wider Digital Strategy covers more than AI alone, and it is useful to start with a distinction between technology use and AI. Technology use is not new, and the adoption of new technology is ongoing. Safety and safeguarding in these areas are longstanding and are backed up by policy, from acceptable use policies, codes of conduct and safeguarding steers to PSHEE and GDPR. Preparing children for a digital future, including success, safety and well-being within it, is not new.

AI, however, is new. The challenge is how to mitigate its risks and harness it to enhance and transform education. We deliberately haven’t tried to put together a formalised AI Policy. This is partly because, as stated above, digital safety is already covered, but it is also because AI is so fast-moving. A fixed policy on AI written now would be problematic in six months. That’s why the approach has to be driven by values and pedagogy, so that it can be flexible and empowering in a context of rapid change while never losing sight of safety.

Pedagogically, the test is to find ways of ensuring that AI can accelerate and deepen thinking, not be a substitute for it. Rather than preventing its use as a faster route to the same answers we could get before, we should encourage its use to move pupils to a point further along a journey of intellectual challenge or understanding. They can then be helped to think just as deeply and to greater effect. The pedagogical rule, then, is that AI must be used to provide or facilitate intellectual challenge, not steal it away. That’s why we don’t like it being used to do a pupil’s homework for them, but we welcome its use to support SEND access or, for example, to generate different interpretations of information whose similarities and differences a pupil can then be asked to evaluate.

The same applies to teaching as much as to learning. We don’t want teachers using AI to cut corners in a way that means they know their pupils and their learning needs less well. However, we will find ways to use it to generate a more insightful understanding of patterns of thought or learning, empowering a teacher to support each pupil better. Can AI do your marking, therefore? Not with pedagogical integrity if it means you understand the pupils’ needs less well, but yes, if it allows for a wider analysis of class responses that leads to sharper, better-targeted intervention for the benefit of all.

Here at Truro School, there is a continual and inclusive drive to consider AI’s potential to augment our educational offering. Our half-termly AI meetings provide an open forum for discussion and, naturally, one of the biggest questions our teachers are keen to explore there is how to use AI to challenge our pupils’ thinking.

One staff member recently shared how they used AI to generate responses to a series of ethical dilemmas. The pupils were then given the chance to respond to these, drawing on the responses of key scholars. This exercise enabled them to think more deeply in reaching their conclusions while examining the limitations of AI-generated content.

Our sixth formers recently took part in a debate on the ethics of AI, led by our respective Heads of Psychology, Economics and Religious Studies. AI has also been a popular focus for our Sixth Form Extended Project Qualification (EPQ). Presentations have included AI’s role as a revision tool and the development of effective artificial learning programs.

We empower our staff to talk about the limitations of AI with our pupils too: how can pupils navigate their way through biased responses, inaccuracies or false information? Metacognition applies in this context, as it does wherever we help learners become better learners. A crucial part of our teaching around AI must be to empower pupils to become adept at applying critical analysis to AI outputs, and to teach them what AI cannot do as well as what it can.

In such a dynamic and exciting environment, there is a considered and purposeful drive to understand AI’s potential for learning and to encourage fair and transparent use. Once we get over the scare stories about corner-cutting or cheating (best addressed via our values), the pedagogy of AI is not dissimilar to that of other paradigm-changing technologies. Once upon a time, the printed word allowed students to learn from those they couldn’t meet. Skipping over the invention of radio and then television, more recently the World Wide Web opened up research potential to anybody with an internet-connected device in their home or office. In all cases, the fear was that change might damage, destroy, or distract, until it was realised how it could also enhance, excite, and empower.

On some level, AI is no different, although the speed of its evolution is, we must admit, unprecedented. But that’s precisely why staying rooted in our values, with a clear sight of pedagogy, matters so much.

Andy Johnson

Head of Truro School

Truro School is part of the Methodist Independent Schools Trust (MIST)

MIST Registered Office: 66 Lincoln’s Inn Fields, London WC2A 3LH

Charity No. 1142794

Company No. 7649422